NATO, Russia, and Empathy: Modern Lessons from a Cold War Military Exercise

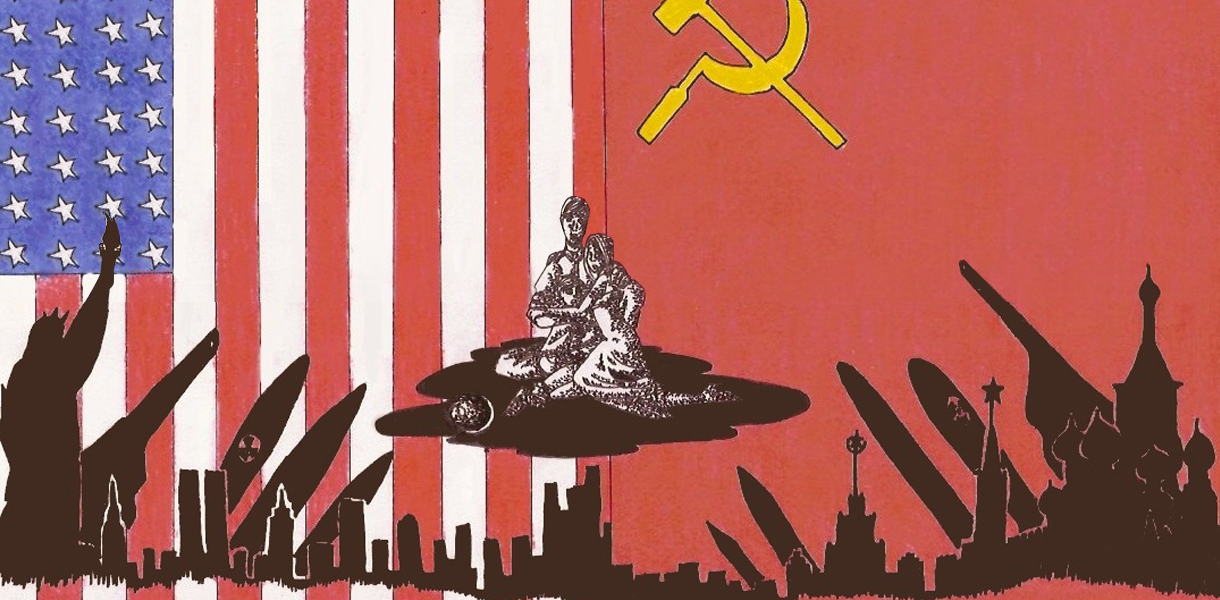

In November 1983 the Soviet Union began to increase the combat readiness of its forces in Eastern Europe, including the air force forward-deployed in East Germany, in preparation to meet an expected pre-emptive strike by the United States and its allies.

The cause of this anxiety was the 1983 Able Archer NATO military exercise, an unusually large affair that focused on concentrating major formations of allied units in Western Europe in order to fight a combined arms operation, inclusive of tactical nuclear weapons, against the Warsaw Pact.

The series of events leading up to and including this exercise highlights multiple, highly serious intelligence failures by both sides. A recently declassified report from the President’s Foreign Intelligence Advisory Board, written in 1990 following a thorough investigation into the resulting ‘war scare’, brings to light deeply worrying misinterpretations by each side of the other’s actions. Many of these same failures are all too apparent in the ongoing NATO-Russia confrontation, in particular the lack of empathy and critical self-assessment.

Taking place as it did in a period of particular hostility between the USA and USSR, effective strategic communication relaying the scope and explicit purpose of Able Archer should have been paramount. Instead, the report reveals that the US intelligence community routinely dismissed the concerns raised by the Soviet leadership relating to the military build-up in Western Europe as ‘propaganda’. Furthermore, the warfighting preparation initiated by the USSR in response to Able Archer was not identified as such, with no correlation drawn between the increased readiness of forward-deployed Soviet units and NATO military activity. Even more concerning, when no intelligence on a particular unit’s operational readiness was available, it was routinely assumed to have remained at the same level as in the last assessment. As the Board makes clear, a preconception among western intelligence agencies that all was well was “defended by explaining away facts inconsistent with [this view] and by failing to subject that interpretation to a comparative risk assessment.” It was only due to the “instinctual” restraint shown by local commanders, bereft of effective strategic intelligence input, that NATO units did not respond more aggressively to the increased readiness of Soviet units, and thus avoided a potentially catastrophic escalation. At the highest level, this consistent misreading of the Soviet leadership’s concerns resulted in the President making diplomatic decisions based upon assessments that understated the risks to the United States and its allies.

It is clear from the Able Archer report that the US intelligence community in 1983/84 was too rigid in sticking to its assumptions to respond effectively to events as they unfolded. The Soviet concern that the US was preparing to utilise its increasing superiority by launching a first strike against the Warsaw Pact had by 1983 already been reflected in significant modifications to the Soviet force posture. These included, among others, the forward deployment of special operations forces, increased readiness of ballistic missile submarines (shorter launch times; more time spent at sea), improved combat readiness of drafted personnel, [1] and a diplomatic provision that permitted the USSR to unilaterally commit the forces of its Warsaw Pact allies to war. These developments took place against the backdrop of the much more widely publicised deployment, first by the USSR and then by the USA, of intermediate-range, nuclear-capable missiles to Europe.

The report also highlights failings in the Soviet process that led to its partial mobilisation. Exacerbated by a deep mistrust and lack of understanding of the Reagan administration, policy makers in Moscow had become increasingly reliant on an intelligence interpretation algorithm known as VRYAN (from the Russian acronym for ‘Surprise Nuclear Missile Attack’). In essence VRYAN was a computerised system, created in 1979, that measured as a numerical value the relative cumulative power of the USSR and the USA. It assigned the USA a value of 100, with the USSR expressed as a percentage of that figure. The model determined that if the relative Soviet value dropped to 40 or below then the USA would have enough of an advantage to launch a pre-emptive, disarming strike. It thus followed that the Soviet Union should itself launch such a strike whilst it still could. Determining this relative value relied on the input of c.40,000 factors, which were centred around the Soviet perception of those political, economic, and military factors that had proved key to victory in the Second World War. KGB residencies around the world were responsible for collecting these inputs and forwarding them to the central analysis centre in Moscow. Whilst VRYAN allowed for the collation of large quantities of data and arguably presented it in a useable form faster than conventional analysis, the lack of proper qualitative analysis, and of the sensitivity to nuance that such analysis provides, distorted leadership perception. This distortion drew criticism that VRYAN, and thus the leadership figures relying on its product, was predisposed to assume a greater risk of conflict. This particular criticism proved crucial in the discontinuation of VRYAN in 1985 following the Able Archer war scare.
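The scoring logic described above can be illustrated with a short sketch. Only the baseline of 100 for the USA and the threshold of 40 come from the account given here; the weighted-sum aggregation, the `Factor` fields, and the three sample inputs are hypothetical stand-ins, since the actual model and its c.40,000 factors are not described in detail.

```python
from dataclasses import dataclass

US_BASELINE = 100.0      # from the text: the USA is fixed at a value of 100
STRIKE_THRESHOLD = 40.0  # from the text: 40 or below was treated as critical


@dataclass
class Factor:
    """One input indicator. All fields are hypothetical illustrations."""
    name: str
    weight: float  # assumed relative importance of this factor
    ussr: float    # assumed raw Soviet score on this factor
    usa: float     # assumed raw US score on this factor


def relative_power(factors):
    """Return Soviet cumulative power as a percentage of the US value.

    Assumption: factors are combined as a simple weighted sum. The real
    system's aggregation method is not given in the article.
    """
    ussr_total = sum(f.weight * f.ussr for f in factors)
    usa_total = sum(f.weight * f.usa for f in factors)
    if usa_total <= 0:
        raise ValueError("US total must be positive")
    return US_BASELINE * ussr_total / usa_total


def at_or_below_threshold(score):
    """True if the relative Soviet value has fallen to the critical level."""
    return score <= STRIKE_THRESHOLD


# Hypothetical inputs, invented purely for illustration.
sample = [
    Factor("military", 0.5, 60.0, 100.0),
    Factor("economic", 0.3, 45.0, 100.0),
    Factor("political", 0.2, 70.0, 100.0),
]
score = relative_power(sample)
print(round(score, 1), at_or_below_threshold(score))
```

The sketch makes the criticism concrete: a single number crossing a fixed threshold carries no information about intent or context, so any bias in the inputs or weights feeds directly into the alarm.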

It is unfortunately the case that many of the mistakes that led to the 1983/84 war scare are being repeated today. The failure of the analytical community to adopt a self-critical perpetual beta [2] approach to its predictions, combined with a predilection to dismiss stated Russian or NATO concerns as propaganda or as an extended form of multidimensional warfare without first subjecting them to an empathetic review, is a real inhibitor to establishing an effective interpretation of events.

The failure of the intelligence communities on both sides in 1983/84 to correctly interpret the actions of the other is in many ways mirrored by the unnecessary ambiguity in modern NATO-Russia relations. The shuttering of information-sharing mechanisms following the Russian intervention in Ukraine plays a fundamental role in this. However, the deep lack of empathy between NATO member states and Russia is arguably a bigger hindrance to determining effective policy. An April 2016 study by the European Leadership Network found that the fundamental base interpretation of world events by Russian and western leaders and populations differs not just due to variable political expediency, but due to sincere differences of interpretation of international law and norms.

Neither side has made a concerted effort to address this impasse, with both accusing the other of a cynical and wilful misinterpretation of international law and norms, or at worst simply dismissing the other’s case as propaganda. This cognitive block, the inability to empathise with the other, remains just as serious an inhibitor to effective intelligence analysis and policy making in 2016 as it was in 1983. What is not acknowledged is that empathy is not synonymous with sympathy. [3] Attempting to develop an understanding of another’s perspective does not necessarily denote agreement with that perspective or with the actions resulting from it. The failure to draw this distinction is readily apparent in the public debate on Russia policy today, in which efforts to explain the process by which the Russian administration has acted are all too often dismissed as a modern form of appeasement.

This failure to think critically and empathetically has serious ramifications. Whilst it will never be possible to predict with absolute certainty the behaviour of any international actor, developing a well-grounded understanding of how they perceive the world and the events that have shaped it is a crucial foundation.

The report by the Foreign Intelligence Advisory Board also serves to highlight another fundamental problem with intelligence analysis that persists, namely the lack of critical self-evaluation by the intelligence community following its failure to properly interpret Soviet actions preceding and during Able Archer. The Board documents a period of lacklustre self-evaluation between 1984 and 1990 in which the most critical voices were dismissed, before outlining possible modifications.

This lack of institutionalised critical assessment and improvement is a theme that has been developed substantially by Philip Tetlock and Dan Gardner of the Good Judgement Project. [4] Tetlock and Gardner criticise the lack of self-assessment within the analytical community, encompassing the political predictions made by think tankers, journalists and professional intelligence analysts. This criticism is based on the lack of a methodology, or indeed a desire, to hold predictions accountable to events as they unfold in reality.

Vague analysis is a particular concern. This is evident in the use of such subjective verbiage as ‘likely’ or ‘probable’. Such terms do not provide an effective base from which to make policy decisions and do not lend themselves to retrospective evaluation. The Good Judgement Project instead champions the use of a percentage figure to denote probability. This not only provides a much clearer position from which to make a decision (whilst intelligence agencies increasingly make use of this format, it is still worryingly absent from public analysis and political debate), but is also easier to evaluate retrospectively. Most importantly, however, it allows for incremental modification as events develop; for example, increasing the probability of an event occurring from 43% to 44% following the release of new data. This continually modified approach is qualitative in nature, with the best results garnered through collective judgement among analysts (the wisdom of the crowd, obtained by taking the mean of their estimates). [5] This concept of constant development, or perpetual beta, is crucial for effective analysis.
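The workflow outlined above, in which analysts give percentage forecasts, revise them as data arrives, pool them by taking the mean, and are later held to account against what happened, can be sketched as follows. The analyst figures are invented for illustration, and the scoring rule used here, the Brier score in its single-outcome form (0 is best, 1 is worst), is one common choice that the text above does not itself name.

```python
from statistics import mean


def aggregate(forecasts):
    """Pool individual probabilities (each between 0 and 1) by their mean."""
    if not forecasts:
        raise ValueError("at least one forecast is required")
    for p in forecasts:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
    return mean(forecasts)


def brier_score(probability, occurred):
    """Squared error between a forecast and the outcome (0 best, 1 worst).

    Assumption: the binary, single-term form of the Brier score is used
    as the retrospective measure; the article does not specify one.
    """
    outcome = 1.0 if occurred else 0.0
    return (probability - outcome) ** 2


# Hypothetical analyst forecasts for one event.
analysts = [0.40, 0.43, 0.46]
pooled_before = aggregate(analysts)

# One analyst makes a small revision after new data, from 43% to 44%.
analysts[1] = 0.44
pooled_after = aggregate(analysts)

# Once the outcome is known, every forecast can be scored.
event_occurred = True
print(round(pooled_before, 4), round(pooled_after, 4))
print(round(brier_score(pooled_after, event_occurred), 4))
```

A term such as ‘likely’ cannot be passed to a function like `brier_score`, which is the practical sense in which vague language resists retrospective evaluation.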

It is of the utmost importance that the mistakes of Able Archer are not repeated. At worst a lack of rigour in intelligence analysis and presentation can distort vital national decisions relating to war and peace, as is now painfully clear following the release of the inquiry report into the United Kingdom’s decision to go to war with Iraq in 2003.

Even on a theoretical planning level inaccurate analysis is dangerous. For example, the simulations that United States Strategic Command conducts in order to develop the USA’s deterrence policy rely on detailed profiles of possible adversaries. If these profiles are based on inaccurate analysis then any conclusions drawn from these exercises, included in deterrence procedure, and, ultimately, presented to the President at a time of national crisis, are fundamentally flawed.

Russia-NATO relations are currently in flux, in a cycle characterised by mutual recriminations over past and current behaviour and interlinked modifications of military force posture on a scale not seen since the early 1990s. In such a rapidly changing environment the analytical margin of error is thin. It is thus imperative to draw on the lessons of the past and adopt a more empathetic, perpetual beta approach in an effort to avoid miscalculation and escalation. This will not end the current confrontation, but presenting our political leaders with a clearer, probability-based analysis that is as free as possible from prejudice is an important start.

1 These measures included recalling reservists, lengthening service times, increasing draft ages, abolishing many draft deferments, and ending military support for the gathering of the harvest.

2 Perpetual beta, originally a software development term, suggests that a project or concept can never be definitively completed. It thus remains in constant development.

3 It is worthy of note that the Russian noun ‘empatiya’ (эмпатия) has only recently been adopted from English. The definition remains similar but may imply ‘sostradaniye’ (сострадание - compassion) or ‘sochuvstviye’ (сочувствие - sympathy), as is the case with an older translation of empathy, ‘soperezhivaniye’ (сопереживание). This convoluted translation process will unfortunately complicate the adoption of this concept in Russia.

4 See Tetlock and Gardner (2016), Superforecasting: The Art and Science of Prediction, Random House Books.

5 The Good Judgement Project further improves its forecasting results by forming teams of ‘Superforecasters’, those analysts that have consistently produced the most accurate forecasts. These teams consistently outperformed the US intelligence community.

First published: European Leadership Network